In an era where artificial intelligence increasingly shapes our social and political landscapes, the line between innovation and manipulation grows ever thinner. As AI tools become more complex, so do the ways they can be misused to sway public opinion, influence elections, and undermine democratic processes. Navigating this terrain requires not only technological awareness but also a sharp understanding of the legal challenges involved. In this listicle, we explore **8 Legal Issues in AI Misuse for Political Manipulation**, shedding light on the critical legal gray areas, potential liabilities, and regulatory hurdles. Whether you’re a policymaker, legal professional, or simply a curious observer, this guide will equip you with essential insights into how the law confronts the evolving risks of AI-driven political influence.

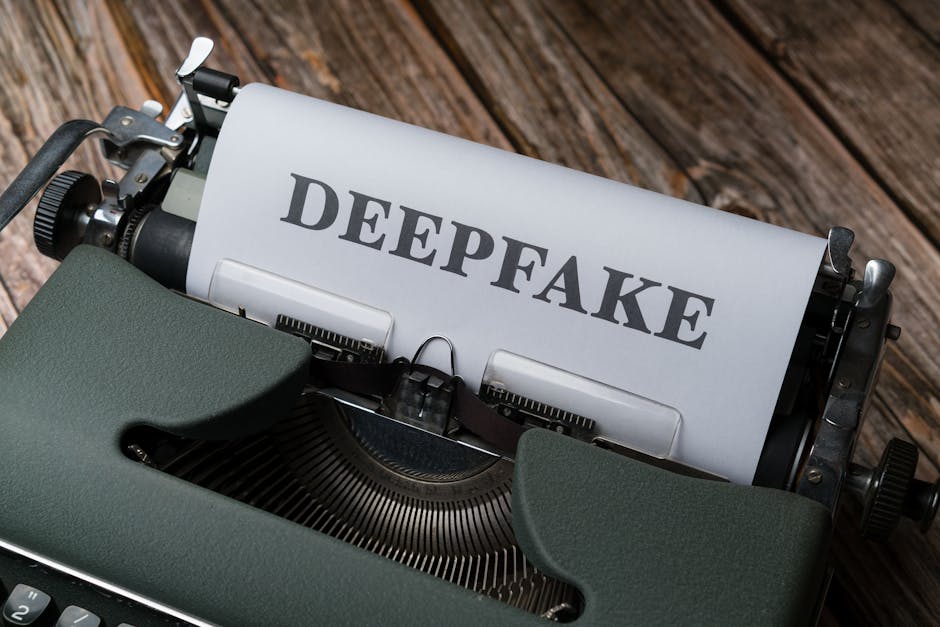

1) Deepfake Legislation Gaps: The rise of AI-generated deepfakes in political campaigns exposes significant loopholes in existing laws that struggle to address the malicious use of manipulated media for misinformation

Existing legislation often lags behind the rapid evolution of AI technology, leaving critical gaps that bad actors can exploit. Currently, many laws focus on traditional forms of defamation or fraud, but they lack the specific provisions necessary to tackle deepfake-generated content used in political contexts. This creates a gray area where manipulated videos and audio can be disseminated with little legal consequence, fueling misinformation and eroding public trust. Without explicit legal definitions, authorities struggle to identify and prosecute those responsible for creating and distributing these synthetic media pieces.

| Legal Gaps | Impact |
|---|---|
| Vague definitions of “manipulated media” | Difficulty in attribution and enforcement |
| Lack of specific penalties for deepfake creation | Reduced deterrence for malicious actors |
| Limited cross-jurisdictional cooperation | Challenges in international enforcement |

As lawmakers grapple with these gaps, the risk remains that regulations become outdated before they can adapt, allowing malicious campaigns to thrive undetected. Bridging these gaps requires not only clear legal definitions and penalties but also the integration of technological safeguards that can detect and flag AI-manipulated content in real time. Without proactive legislative evolution, the potential for deepfakes to distort political discourse continues to grow unchecked.

2) Data Privacy Violations: Political entities leveraging AI to micro-target voters often engage in unauthorized data harvesting, raising critical concerns about consent and the unlawful exploitation of personal information

In the race to personalize political messaging, some organizations cross ethical boundaries by secretly collecting vast amounts of personal data without explicit consent. This covert harvesting typically involves scraping social media profiles, analyzing online behaviors, and exploiting third-party databases, creating a shadow ecosystem of voter information that lacks transparency. The result is a landscape where individuals’ digital footprints are mined relentlessly, often without their understanding the extent to which their private lives are under scrutiny.

Such practices not only threaten individual privacy rights but also pose significant legal challenges:

- Unlawful Data Collection: Extracting data without proper authorization violates existing privacy laws in many jurisdictions.

- Consent Violations: Micro-targeting campaigns frequently bypass explicit consent, raising questions about user rights and agency.

- Data Monetization Risks: Personal information is often sold or shared with third parties, amplifying privacy breaches.

| Violation Type | Potential Penalty | Impact |
|---|---|---|
| Unauthorized Harvesting | Fines & sanctions | Loss of public trust |
| Consent Breaches | Lawsuits | Reputational damage |
| Data Sharing | Regulatory scrutiny | Operational restrictions |

3) Algorithmic Transparency and Accountability: The opaque nature of AI algorithms used in political messaging challenges legal frameworks designed to ensure fair and accountable communication in democratic processes

One of the most pressing issues with AI in political contexts is the **lack of transparency** in how algorithms shape messaging. Many AI systems operate as “black boxes,” making it challenging for regulators, watchdogs, or the public to understand how decisions are made or which data influences specific outputs. This opacity undermines the foundations of democratic accountability, where clarity and oversight are essential for ensuring fair communication. Without clarity on the inner workings, it becomes nearly impossible to identify biases, rectify misinformation, or hold actors accountable for the misuse of AI-driven tactics.

Moreover, the absence of **standardized frameworks** for auditing and verifying AI algorithms exacerbates these concerns. Policymakers face challenges in establishing effective legal oversight as they lack the tools to scrutinize and evaluate proprietary or complex models.

Potential risks include:

- Unintended manipulation through biased algorithms

- Difficulty in tracing causality behind political messaging

- Challenges in enforcing fairness and preventing discriminatory practices

| Aspect | Issue |
|---|---|
| Opacity | Black-box algorithms hinder accountability |
| Standards | Lack of uniform guidelines for AI audits |
| Impact | Hinders fair electoral processes |

4) Election Interference and Cybersecurity: The deployment of AI tools to disrupt electoral systems or spread disinformation tests the limits of laws aimed at safeguarding election integrity against modern cyber threats

AI-powered tools have opened a Pandora’s box for election security. Malicious actors can craft sophisticated disinformation campaigns, leveraging deepfake technology and AI-generated content to sway public opinion or create chaos. Legal frameworks struggle to keep pace with these rapid technological advances, often lacking clear guidelines on accountability and methods for detection. This vulnerability forces election regulators and cybersecurity experts into an ongoing game of catch-up, where layered cyber threats evolve faster than existing laws can address them.

To confront these challenges, some regions are considering new legislative measures that target the use of AI in election interference. Potential regulations include stricter controls on AI-generated media, mandatory transparency disclosures for political content, and enhanced cybersecurity protocols for electoral infrastructure. Here is a quick overview:

| Action | Goal |
|---|---|
| AI detection tools | Identify deepfakes and synthetic content |
| Transparency laws | Require disclosure of AI use in political messaging |
| Cybersecurity upgrades | Protect electoral systems from malicious intrusion |

5) Defamation and Libel Through AI Content: Automated generation and dissemination of false or misleading political statements blur the lines of responsibility, complicating defamation claims under current legal standards

AI-generated content allows for the rapid proliferation of false or misleading political statements, making it increasingly difficult to assign responsibility. When an AI synthesizes and spreads defamatory remarks about individuals or groups, identifying the true source becomes a complex puzzle, often leaving victims without clear recourse. This technological veil challenges traditional legal frameworks, which rely on proving intent and culpability, and raises questions about accountability in the digital age.

Moreover, the ambiguity surrounding AI authorship complicates libel claims, as legal standards for defamation are designed around human actors. Defenses such as plausible deniability, or the claim that content was generated automatically under institutional control, further blur the lines of responsibility. A hypothetical scenario might involve a malicious actor using AI to generate damaging falsehoods that are then shared across platforms, leaving victims and authorities grappling with whether the AI’s creator, the platform hosting the content, or the end-user can be held liable. This evolving landscape demands innovative legal strategies to uphold accountability without stifling technological progress.

6) Manipulation of Social Media Platforms: AI-driven bots and coordinated inauthentic behavior used to skew public opinion confront regulatory systems trying to balance freedom of expression with harmful manipulation

Artificial intelligence has empowered malicious actors to deploy sophisticated bots and coordinated campaigns that mimic genuine human activity online. These virtual puppeteers flood social media platforms with disinformation, fake accounts, and manipulated content designed to sway public opinion or erode trust in institutions. The challenge for regulators lies in distinguishing between authentic expression and inauthentic influence, especially as these AI-driven tactics evolve rapidly, making static policies quickly outdated. The blurred line between free speech and harmful deception demands a nuanced approach that can adapt to the speed of technological innovation while safeguarding democratic values.

Attempts to curb such manipulation often create a complex regulatory maze, as platforms are caught between upholding freedom of expression and preventing abuse. Coordinated inauthentic behavior can distort election outcomes, foment social division, and undermine public confidence. Regulatory systems are experimenting with measures like transparency mandates, real-time content monitoring, and AI detection tools; however, the pervasive use of AI to craft convincing yet deceptive content continues to challenge enforcement efforts. Developing legal frameworks that can navigate this digital minefield remains one of the most pressing issues in safeguarding fair political processes.

| Tool | Purpose | Challenge |
|---|---|---|
| AI Bots | Fake engagement & information spread | Detecting authenticity in real time |
| Deepfake Videos | Fake yet convincing visual content | Preventing malicious misinformation |
| Automated Commenting | Amplify messages & create echo chambers | Identifying coordinated campaigns |

7) Intellectual Property Infringement in Political AI Tools: The use of copyrighted material without permission in AI-generated political content poses challenges in enforcing intellectual property rights within turbulent digital landscapes

Key challenges include:

- Difficulty in tracking the original source of AI-generated content that infringes upon copyrights.

- Legal ambiguities around the fair use doctrine when AI synthesizes and disseminates political messages.

- Potential for significant legal repercussions for developers and users who overlook copyright protections in their AI training datasets.

| Aspect | Concern |
|---|---|
| Training Data | Using copyrighted political content without consent |
| Content Generation | Producing derivative political materials infringing rights |
| Legal Liability | Accountability for misuse or infringement |

8) International Jurisdictional Challenges: Cross-border AI-driven political manipulation creates complex legal dilemmas regarding jurisdiction and enforcement, as actions may violate multiple national laws simultaneously

The borderless nature of AI-driven political manipulation presents a tangled web for legal authorities. When malicious actors deploy AI tools across multiple jurisdictions, pinpointing responsibility and enforcing laws becomes a formidable challenge. Different countries often have divergent standards, regulations, and enforcement mechanisms, which can lead to conflicting legal outcomes. As a result, an action deemed illegal in one nation might be permissible or go unnoticed in another, complicating efforts to hold perpetrators accountable.

Key issues include:

- Jurisdictional overlap where multiple countries claim authority over a single act

- Inconsistent legal definitions of manipulation, misinformation, and interference

- Difficulty tracking and prosecuting cross-border operators exploiting legal loopholes

| Jurisdiction | Legal Challenge |
|---|---|
| Country A | Prohibits AI misuse but lacks enforcement resources |
| Country B | Allows certain propaganda tactics as free expression |
| International | Lacks unified legal framework for AI misconduct |

Wrapping Up

As the digital battleground of politics continues to evolve, the misuse of AI presents complex legal dilemmas that demand our attention. From misinformation campaigns to data privacy infringements, these eight legal issues underscore the urgent need for clear regulations and vigilant oversight. Navigating this uncharted territory won’t be easy, but understanding the challenges is the first step toward safeguarding the integrity of our democratic processes in an age where artificial intelligence wields unprecedented influence.